
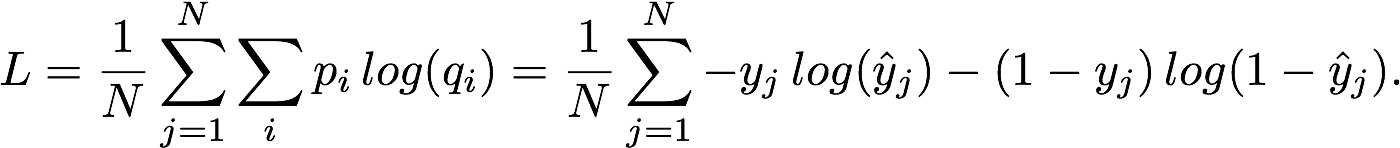

2/16/2023 0 Comments

Shannon entropy

Consider two machines. They both output messages from an alphabet of A, B, C, or D. Machine One generates each symbol randomly, so they all occur 25% of the time, while Machine Two generates symbols according to the following probabilities: A occurs 50% of the time, D 25% of the time, and B and C 12.5% of the time each. Which machine is producing more information?

Claude Shannon cleverly rephrased the question. If you had to predict the next symbol from each machine, what is the minimum number of yes or no questions you would expect to ask?

Let's look at Machine One. The most efficient approach is to pose a question which divides the possibilities in half. For example, for our first question, we could ask if it is any two symbols, such as "is it A or B?", since there is a 50% chance of A or B and a 50% chance of C or D. After getting the answer, we can eliminate half of the possibilities, and we will be left with two symbols, both equally likely. So we simply pick one, such as "is it A?", and after this second question, we will have correctly identified the symbol. We can say the uncertainty of Machine One is two questions per symbol.

What about Machine Two? As with Machine One, we could ask two questions to determine the next symbol. However, this time the probability of each symbol is different, so we can ask our questions differently. Here A has a 50% chance of occurring, and all other letters add to 50%. We could start by asking "is it A?". If it is A we are done, with only one question in this case. Otherwise, we are left with two equally likely outcomes: D, or B and C. We could ask, "is it D?". If it is, we are done after two questions. Otherwise, we have to ask a third question to identify which of the last two symbols it is. On average, how many questions do you expect to ask to determine a symbol from Machine Two?

This can be explained with an analogy. Let's assume instead we want to build Machine One and Machine Two, and we can generate symbols by bouncing a disc off a peg in one of two equally likely directions. Based on which way it falls, we can generate a symbol. For Machine One, we need to add a second level, or a second bounce, so that we have two bounces, which lead to four equally likely outcomes. Based on where the disc lands, we output A, B, C, or D.

Now Machine Two. In this case, the first bounce leads to either an A, which occurs 50% of the time, or else we lead to a second bounce, which then can either output a D, which occurs 25% of the time, or else it leads to a third bounce, which then leads to either B or C, each 12.5% of the time. Now we just take a weighted average as follows. The expected number of bounces is the probability of symbol A times one bounce, plus the probability of B times three bounces, plus the probability of C times three bounces, plus the probability of D times two bounces. This works out to 1.75 bounces.

Notice the connection between yes or no questions and fair bounces. The expected number of questions is equal to the expected number of bounces. So Machine One requires two bounces to generate a symbol, while guessing an unknown symbol requires two questions. Machine Two requires 1.75 bounces, and we need to ask 1.75 questions on average, meaning if we need to guess a hundred symbols from both machines, we can expect to ask 200 questions for Machine One and 175 questions for Machine Two. Machine Two is producing less information because there is less uncertainty, or surprise, about its output, and that's it.

Claude Shannon calls this measure of average uncertainty "entropy", and he uses the letter H to represent it. The unit of entropy Shannon chooses is based on the uncertainty of a fair coin flip, and he calls this "the bit", which is equivalent to a fair bounce.

We can arrive at the same result using our bounce analogy. H is the summation, for each symbol, of the probability of that symbol times the number of bounces. The difference is, how do we express the number of bounces in a more general way? As we've seen, the number of bounces depends on how far down the tree we are. We can simplify this by saying that the number of bounces equals the logarithm base two of the number of outcomes at that level. The number of outcomes at a level is also based on the probability, where the number of outcomes at a level equals one divided by the probability of that outcome. Putting these together, H is the summation, for each symbol, of the probability of that symbol times the logarithm base two of one divided by that probability.
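The numbers for the two machines can be checked with a few lines of code. The following is a minimal sketch in Python, not part of the original post: the names `machine_one`, `machine_two`, `entropy_bits`, and `expected_questions` are made up for illustration, and the probabilities and question counts are the ones given in the text (A 50%, D 25%, B and C 12.5% each for Machine Two).

```python
import math

# Symbol probabilities for the two machines, as described in the post.
machine_one = {"A": 0.25, "B": 0.25, "C": 0.25, "D": 0.25}
machine_two = {"A": 0.5, "B": 0.125, "C": 0.125, "D": 0.25}

# Questions (or bounces) needed to reach each symbol of Machine Two
# with the strategy "is it A?", then "is it D?", then "is it B?".
machine_two_questions = {"A": 1, "B": 3, "C": 3, "D": 2}


def expected_questions(probabilities, questions):
    """Weighted average: probability of each symbol times its question count."""
    return sum(probabilities[s] * questions[s] for s in probabilities)


def entropy_bits(probabilities):
    """Entropy H in bits: sum over symbols of p * log2(1 / p)."""
    return sum(p * math.log2(1 / p) for p in probabilities.values() if p > 0)


print(expected_questions(machine_two, machine_two_questions))  # 1.75
print(entropy_bits(machine_one))  # 2.0
print(entropy_bits(machine_two))  # 1.75
```

For Machine Two the weighted average of questions and the value from the logarithm formula agree, because each symbol's probability is one over a power of two, so log2(1/p) is exactly the number of bounces needed to reach it.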
